Wikipedia defines a prototype as an early sample, model, or release of a product built to test a concept or process or to act as a thing to be replicated or learned from. I have created many types of prototypes (visual design, system design, web interface, hardware, paper prototypes, etc.) over the past years; this page lists a selection of them.

Visual prototypes


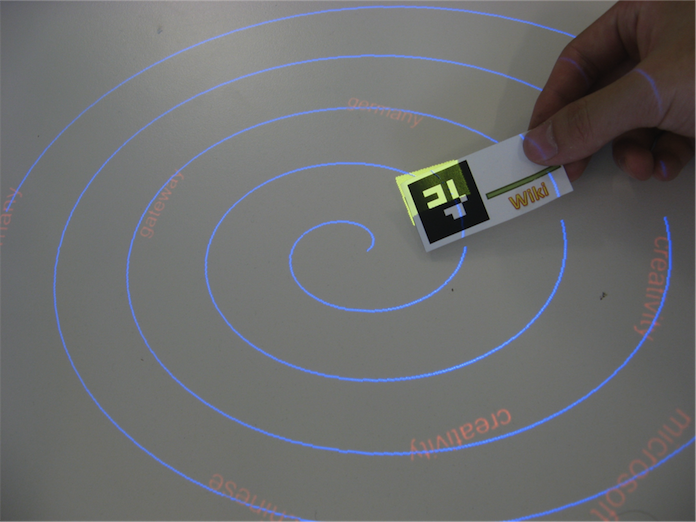

Interactive tabletop prototype: Spiral metaphor (2010)

As people talk in a conversation, the spoken keywords are recognised and presented in the interface. A recognised word then starts rotating along the spiral, with its size shrinking over time. A user can hold a Wiki/Google tag and intersect it with specific words; this action fires a corresponding Wiki/Google search, with the results shown on a separate display. Upon reaching the center of the spiral, the word disappears. Relevant technologies: C++, OpenGL, OpenCV
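
The spiral behaviour can be illustrated with a minimal Python sketch. It is not the original C++/OpenGL code: the function name, the linear shrinking, and all parameter values (`lifetime`, `max_radius`, `turns`, `max_size`) are assumptions made for illustration only.

```python
import math

def spiral_state(age, lifetime=20.0, max_radius=300.0, turns=3.0, max_size=48.0):
    """Return (x, y, font_size) for a word `age` seconds after it was recognised.

    Returns None once the word has reached the centre of the spiral.
    All defaults are hypothetical values, not taken from the prototype.
    """
    if age >= lifetime:
        return None  # the word disappears at the centre
    progress = age / lifetime              # 0.0 at the rim, approaching 1.0 at the centre
    remaining = 1.0 - progress
    radius = max_radius * remaining        # the radius shrinks linearly (assumption)
    angle = 2.0 * math.pi * turns * progress
    x = radius * math.cos(angle)
    y = radius * math.sin(angle)
    return x, y, max_size * remaining      # the word shrinks as it travels inward
```

Calling `spiral_state` once per frame with the word's current age would give the position and size to draw, relative to the centre of the tabletop.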

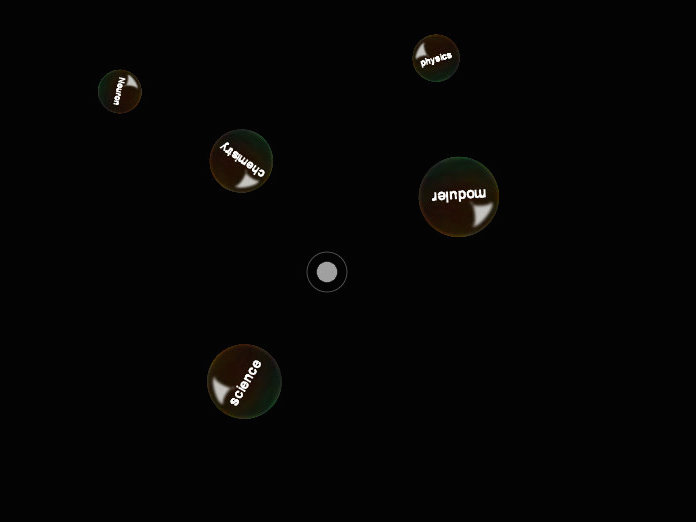

Interactive tabletop prototype: Bubble pipe metaphor (2011)

Similar to the spiral prototype, recognised spoken words are this time presented in bubbles that are blown from the mouth of the pipe in the middle of the tabletop display. The bubbles shrink over time. Again, Wiki/Google tags can be used to pierce a bubble in order to activate a search. Relevant technologies: Java, MT4j framework

System-level prototypes


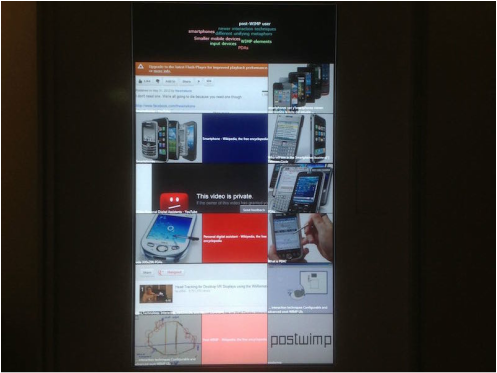

Conversation-aware Interactive Kiosk (2012)

This is an interactive kiosk system that could be deployed in a coffee room or beside a lunch table. Using a microphone and speech recognition, the system constantly listens to the conversation during a coffee or lunch break, automatically grabs potentially interesting websites and shows them on a vertical touch screen. More precisely, it performs a Google image search and presents the image results in tiles (similar to the Microsoft Windows 8 Metro interface) as stimuli to provoke or facilitate conversation. A word cloud at the top of the display shows the keywords identified in the conversation. Keywords that are spoken more than once are made bigger. The layout of the words is generated automatically by a word cloud generation algorithm. Clicking a word in the cloud navigates to a Web browser view that shows the corresponding results. Every image tile is also clickable and takes the user to the corresponding website. Suppose you are talking about football with your colleagues: the display would then facilitate your conversation by providing enriched information about the topic, such as the players and teams you are talking about. Relevant technologies: C#, WPF
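
The rule that repeated keywords grow in the word cloud can be sketched as follows. This is an illustrative Python sketch rather than the kiosk's C#/WPF code; the function name and the sizing parameters (`base_size`, `step`, `max_size`) are assumptions, and the layout algorithm itself is not covered here.

```python
from collections import Counter

def keyword_font_sizes(spoken_keywords, base_size=14, step=6, max_size=50):
    """Map each recognised keyword to a font size that grows with its frequency.

    The linear growth and the size limits are hypothetical choices.
    """
    counts = Counter(word.lower() for word in spoken_keywords)
    return {
        word: min(base_size + step * (n - 1), max_size)  # one step per repetition, capped
        for word, n in counts.items()
    }
```

For example, a keyword heard twice would be drawn one step larger than a keyword heard once.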

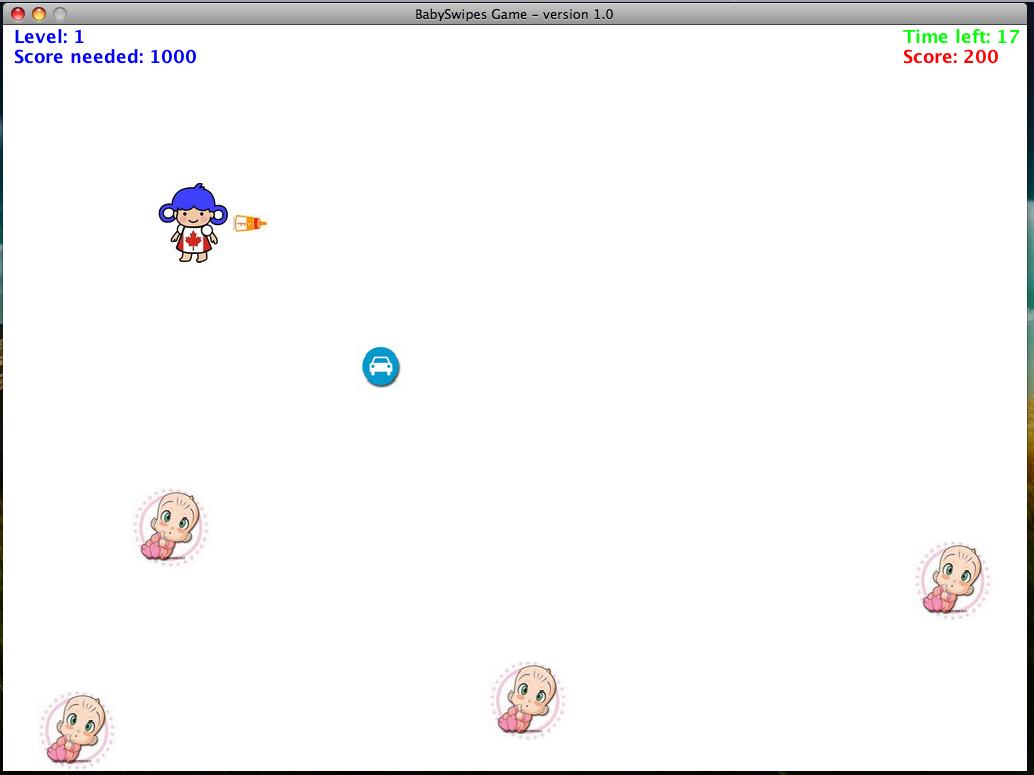

BabySwipe : A Speech-controlled 2D Game

This is a 2D game with very simple logic: the player controls the nanny (blue hair) to feed the babies with bottles of milk. The babies may appear at any position on the screen, and the nanny can throw a bottle in the UP, DOWN, LEFT or RIGHT direction. There are also bonuses (e.g. the car icon); by taking one, the nanny speeds up. The logic resembles the famous Game Boy game "Battle City". But BabySwipe has an interesting feature: it allows you to control the avatar using your speech. When you yell "Up", "Down", "Left" or "Right", the nanny moves in the corresponding direction. When you say "Hey", the nanny throws a bottle. It was not built for research purposes; it was just a game prototype made for fun as coursework. Relevant technologies: Java
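
The mapping from recognised words to game actions can be sketched like this. It is a simplified Python illustration, not the original Java code: the grid coordinates (with y growing downward), the function name, and the rule that a bottle flies in the direction the nanny last moved are all assumptions.

```python
# Screen coordinates are assumed: x grows to the right, y grows downward.
DIRECTIONS = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}

def handle_command(word, position, facing):
    """Apply one recognised word to the nanny.

    Returns (new_position, new_facing, bottle_direction), where
    bottle_direction is None unless a bottle was thrown.
    """
    word = word.strip().lower()
    if word in DIRECTIONS:
        dx, dy = DIRECTIONS[word]
        return (position[0] + dx, position[1] + dy), (dx, dy), None
    if word == "hey":
        return position, facing, facing  # throw a bottle in the facing direction
    return position, facing, None        # unrecognised words are ignored
```

A speech recogniser would feed each recognised word into `handle_command`, and the game loop would then redraw the nanny and spawn a bottle when one is returned.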

Web interface prototypes


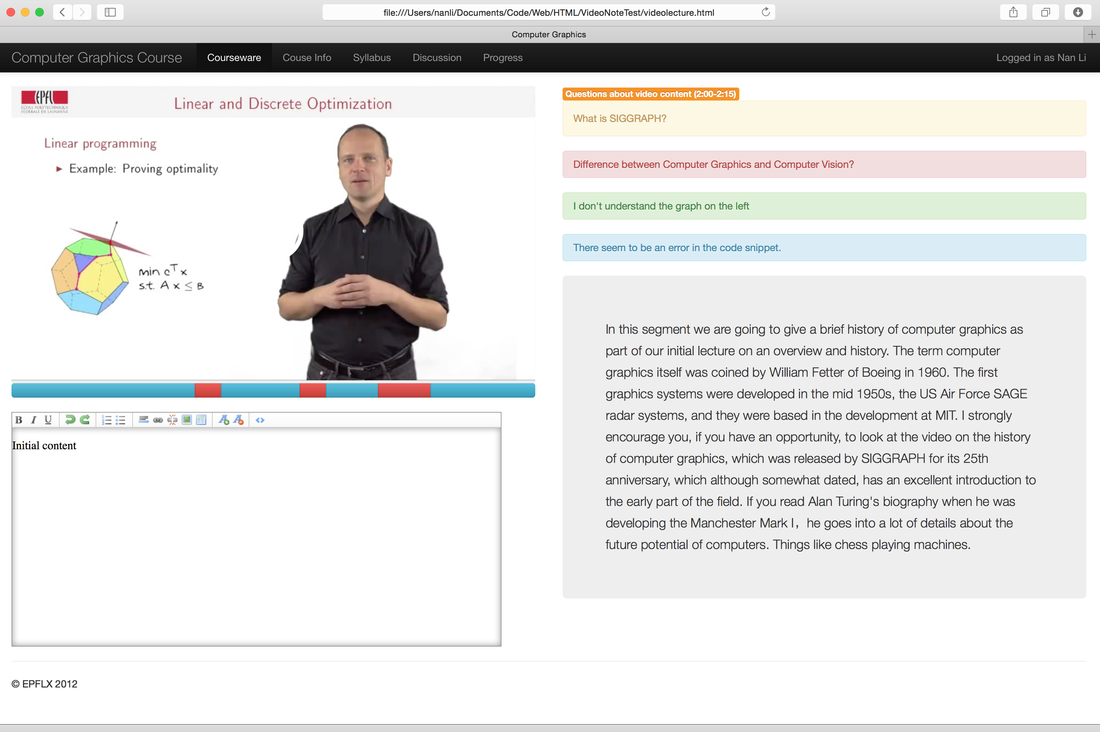

SlideWatch : Linking Videos with Lecture Slides (2012)

This is an early Web interface prototype for improving the MOOC learning experience. The design adds three features to the current MOOC video viewing experience: (1) A discussion system linked to video segments: when playback reaches a commented position, all comments for that position are shown to the student. (2) A note-taking feature that lets students take notes while watching videos and save them in particular formats for future review. (3) A problem indicator (the red bar under the video): based on how thousands of students interact with the same video, i.e. their seek-back navigation and pausing behaviour, the system automatically marks potentially difficult video segments, so that both students and teachers are aware of them. The prototype also includes a further feature. When users print the lecture slides, each slide carries a unique fiducial marker. Nowadays many people watch lecture videos on laptops, mobile phones or tablets, all of which have a camera. When a student reviewing the slides has a question, s/he can hold a printout up to the camera so that it sees the fiducial marker, and the video player then automatically jumps to the corresponding video. I gave a short demo to many educators, and they liked the idea very much. Relevant technologies: HTML5, JavaScript
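
The problem indicator can be illustrated with a small Python sketch that counts pauses and seek-backs per video segment. It is not the prototype's JavaScript code: the event format, the fixed segment length, and the threshold `min_events` are assumptions chosen for illustration.

```python
from collections import Counter

def difficult_segments(events, segment_length=10, min_events=3):
    """Return the (start, end) second ranges that attract many pauses or seek-backs.

    `events` is an iterable of (kind, time_in_seconds) pairs pooled from all
    students; for a "seek_back" the time is assumed to be the seek target.
    """
    counts = Counter(
        int(time // segment_length)
        for kind, time in events
        if kind in ("pause", "seek_back")   # other event kinds are ignored
    )
    return sorted(
        (segment * segment_length, (segment + 1) * segment_length)
        for segment, n in counts.items()
        if n >= min_events
    )
```

The returned ranges are the parts of the timeline that would be highlighted in the bar under the video.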

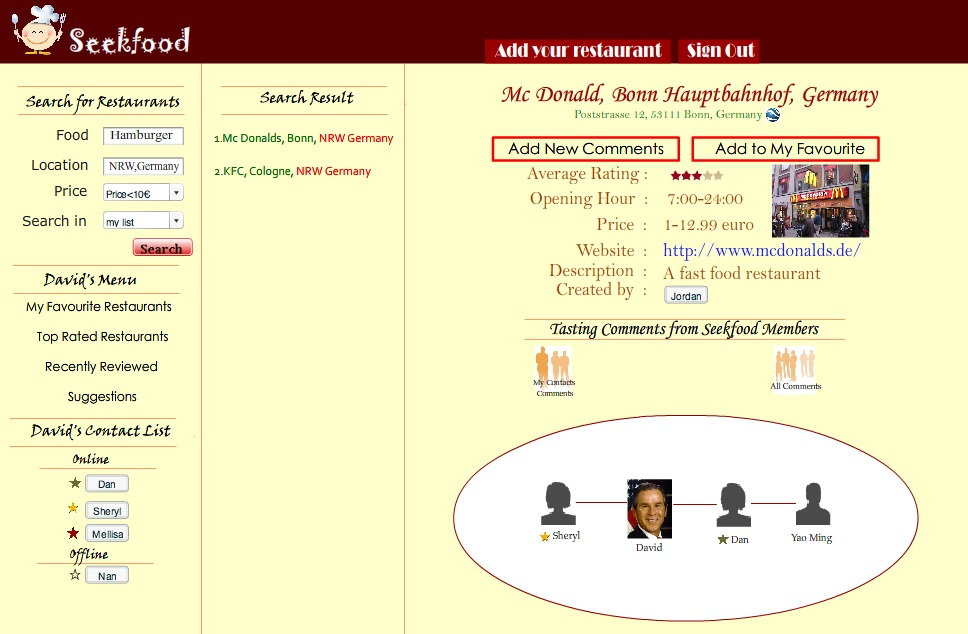

Seekfood : A Social Network for Restaurant Recommendation (2006)

When you visit a new place, have you ever wondered where to eat? Of course, nowadays there are lots of apps available on the Internet or on mobile devices. However, when this prototype was made in 2006, there were no such services. The prototype was made through a design-implementation-evaluation cycle, covering brainstorming, mind-mapping, storyboarding, paper prototyping, interviews and implementation. It was done as a course project, but it is amazing to see how this simple idea has since become real products built by others. Relevant technologies: Flash only

Mobile interface prototypes


GeoReminder : Reminder based on Geolocation (2007)

I don't have a picture of this system anymore. It was one of my "Praktikum" projects done at the Fraunhofer Institute IAIS in Germany. In 2007, smartphones like the iPhone and Android devices were not on the market, so we were among the first to program an early prototype for reminding people to do something based on their geographical location. We used a Bluetooth-enabled GPS receiver and a Pocket PC for this prototype. Relevant technologies: Java
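
The core check of a location-based reminder can be sketched in Python as follows. This is not the original Java code: the haversine distance, the circular trigger radius, and the reminder dictionary keys (`text`, `lat`, `lon`, `radius_m`) are assumptions used only to illustrate the idea.

```python
import math

EARTH_RADIUS_M = 6371000.0  # mean Earth radius in metres

def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two GPS fixes (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def due_reminders(position, reminders):
    """Return the texts of all reminders whose trigger circle contains `position`."""
    lat, lon = position
    return [
        r["text"]
        for r in reminders
        if distance_m(lat, lon, r["lat"], r["lon"]) <= r["radius_m"]
    ]
```

Each new GPS fix would be passed to `due_reminders`, and any returned text would be shown to the user.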

UbiSafe : Configure Safety zone with Ubisense Tags (2007)

I don't have a picture of this system either. It was also one of my "Praktikum" projects done at the Fraunhofer Institute IAIS. The idea is to use several Ubisense tags (Ubisense is an indoor tracking system, analogous to GPS for outdoor use) to configure a topological area, outside which one's computer stops functioning. Relevant technologies: Java
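
One way to decide whether a tracked computer is still inside the configured zone is a point-in-polygon test. The Python sketch below assumes that the tag positions form the corners of a 2D polygon and uses the standard ray-casting method; the original Java implementation may have worked differently, and the function name is hypothetical.

```python
def inside_zone(point, zone):
    """Return True if `point` (x, y) lies inside the polygon `zone`.

    `zone` is a list of (x, y) corners, assumed here to be the positions
    of the tags in order around the area. Uses ray casting: a point is
    inside if a horizontal ray from it crosses the boundary an odd number
    of times.
    """
    x, y = point
    inside = False
    n = len(zone)
    for i in range(n):
        x1, y1 = zone[i]
        x2, y2 = zone[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # this edge spans the ray's height
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:       # the crossing lies to the right of the point
                inside = not inside
    return inside
```

The computer would be locked as soon as `inside_zone` returns False for its tracked position.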

Hardware prototypes


Infrared Glove for Multitouch

This is a simple DIY project. I created a glove with infrared LEDs, switches and batteries. Whenever the user's finger touches the surface, the switch closes and the LED starts emitting light. An infrared tracker sees the light and fires TUIO events for multi-touch.

Carton Multitouch tabletop

I built a multitouch table on my own with a carton box, a camera and sheets of white paper. The picture shown here is not of my construction, but from someone else who attempted to do the same. I did not take a photo of my own carton tabletop.

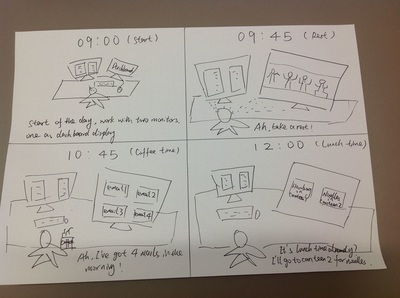

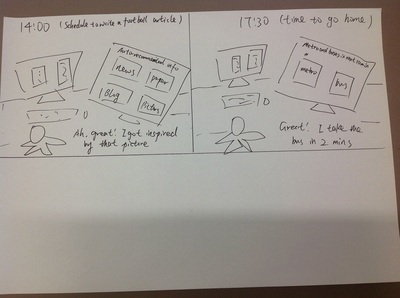

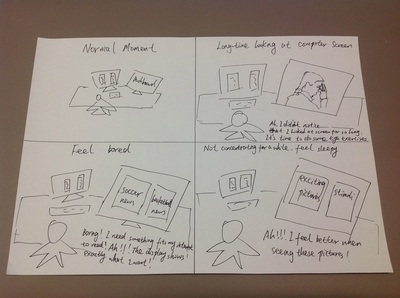

Storyboarding

Strictly speaking, this is not prototyping, because storyboarding happens earlier than the prototyping phase in interaction design. Anyway, I have uploaded one of the "Starman"-like storyboards I created years ago. It depicts an awareness system that aims at managing time and information for an office worker.